Understanding NLP with Deep Learning: Revolutionizing Language Processing

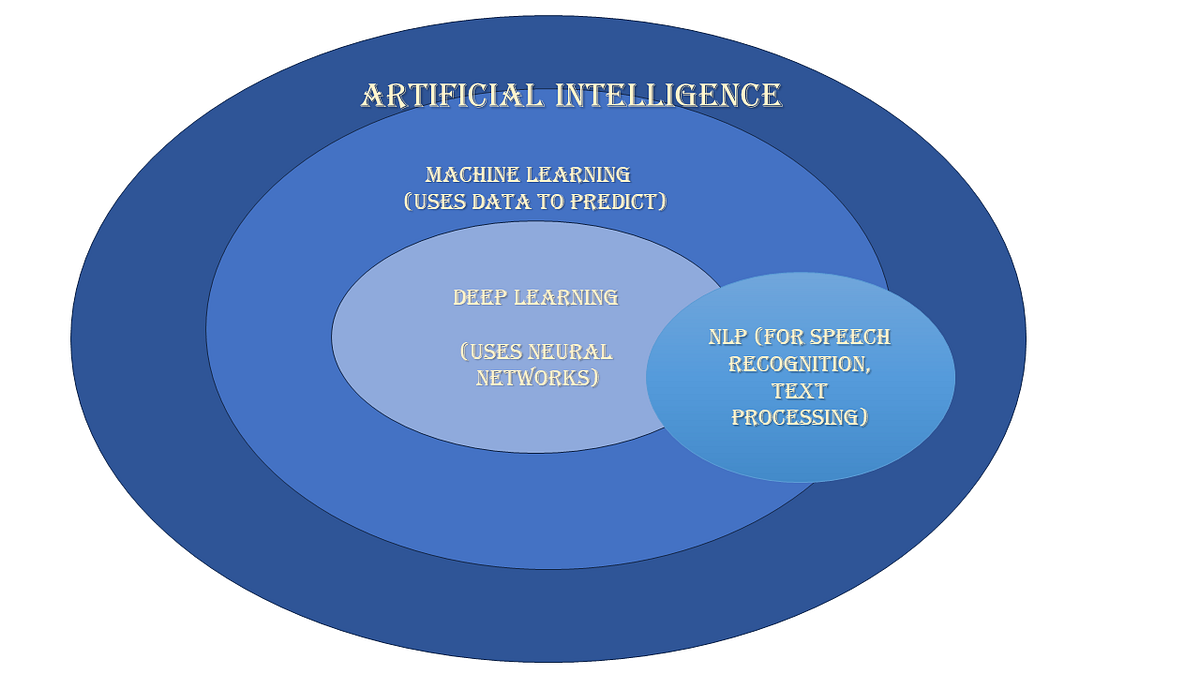

Natural Language Processing (NLP) is a fascinating field at the intersection of computer science, artificial intelligence, and linguistics. It focuses on the interaction between computers and humans through natural language. With the advent of deep learning, NLP has undergone a significant transformation, enabling machines to understand and generate human language with remarkable accuracy.

The Evolution of NLP

Traditionally, NLP relied on rule-based systems and statistical methods to process language. These approaches often required extensive manual feature engineering and were limited in their ability to handle complex language patterns. However, the introduction of deep learning has revolutionized this field by allowing models to automatically learn features from vast amounts of data.

Deep Learning in NLP

Deep learning models, particularly neural networks, have become the backbone of modern NLP systems. These models are capable of handling large datasets and learning intricate patterns within them. Some key architectures used in deep learning for NLP include:

- Recurrent Neural Networks (RNNs): RNNs are designed for sequence data and are particularly useful for tasks like language modeling and translation due to their ability to maintain context across sequences.

- Long Short-Term Memory (LSTM) Networks: A specialized form of RNN, LSTMs mitigate the vanishing gradient problem with a gated cell state that lets them retain information over longer sequences.

- Convolutional Neural Networks (CNNs): Originally developed for image processing, CNNs have been adapted for text classification by sliding one-dimensional filters over sequences of word embeddings, much as image filters slide over pixels.

- Transformers: Transformers have become a game-changer in NLP due to their parallel processing capabilities and attention mechanisms that allow models to focus on relevant parts of input data. The BERT model is a prominent example that leverages transformers for various NLP tasks.
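
To make the CNN idea above concrete, here is a minimal sketch in plain Python of one filter sliding over a sentence, followed by max-over-time pooling. The function names, the toy embeddings, and the filter values are all made up for illustration; a real model would learn many filters and would typically use a framework such as PyTorch or TensorFlow.

```python
from typing import List

Vector = List[float]


def conv1d_over_tokens(embeddings: List[Vector], kernel: List[Vector],
                       bias: float = 0.0) -> List[float]:
    """Slide one filter over a sequence of token embeddings.

    embeddings: one vector per token, shape (seq_len, dim)
    kernel:     one filter spanning `width` adjacent tokens, shape (width, dim)
    Returns one ReLU activation per window (seq_len - width + 1 values).
    """
    width = len(kernel)
    activations = []
    for start in range(len(embeddings) - width + 1):
        total = bias
        for offset in range(width):
            token_vec = embeddings[start + offset]
            filter_row = kernel[offset]
            total += sum(t * k for t, k in zip(token_vec, filter_row))
        activations.append(max(0.0, total))  # ReLU non-linearity
    return activations


def max_over_time(activations: List[float]) -> float:
    """Keep the strongest filter response, wherever it occurred."""
    return max(activations)


# Hypothetical 2-dimensional embeddings for a 4-token sentence.
sentence = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [0.0, 0.0]]
# A hypothetical filter covering 2 adjacent tokens (a "bigram" detector).
bigram_filter = [[1.0, 0.0], [0.0, 1.0]]

feature_map = conv1d_over_tokens(sentence, bigram_filter)
sentence_feature = max_over_time(feature_map)
print(feature_map, sentence_feature)
```

The filter responds most strongly to the first pair of tokens, and pooling keeps that single value as a position-independent feature for a downstream classifier.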

NLP Applications Enhanced by Deep Learning

The integration of deep learning into NLP has led to significant advancements across several applications:

- Machine Translation: Deep learning models have drastically improved translation quality by capturing contextual nuances better than previous methods.

- Sentiment Analysis: Businesses use sentiment analysis powered by deep learning to gauge public opinion from social media or customer reviews with high accuracy.

- Chatbots and Virtual Assistants: These systems can now understand context better and provide more human-like interactions thanks to advancements in deep learning.

- Text Summarization: Automated summarization tools use deep learning to extract essential information from large texts efficiently.

The Future of NLP with Deep Learning

The future holds promising developments as researchers continue exploring more sophisticated models like GPT-3 and beyond. These models aim not only at improving accuracy but also at understanding subtleties such as sarcasm or cultural references within texts.

The combination of vast datasets, powerful computing resources, and innovative algorithms will likely lead us toward even more advanced language processing capabilities that could redefine how we interact with technology daily.

Conclusion

NLP with deep learning represents an exciting frontier in technology, where machines process human language with steadily improving accuracy. As these technologies evolve further, they promise not only enhanced communication but also new possibilities across industries worldwide, making this an area worth watching closely.

Understanding NLP with Deep Learning: Key Concepts, Benefits, and Applications

- What is Natural Language Processing (NLP) and how does it relate to deep learning?

- What are the key benefits of using deep learning in NLP applications?

- How do recurrent neural networks (RNNs) play a role in deep learning for NLP?

- Can you explain the significance of transformers in modern NLP models?

- What are some popular deep learning frameworks used for NLP tasks?

- How has deep learning improved machine translation compared to traditional methods?

- What challenges are associated with training deep learning models for NLP tasks?

- Are there any ethical considerations related to using deep learning in NLP applications?

- How can businesses leverage NLP with deep learning to enhance customer experience?

What is Natural Language Processing (NLP) and how does it relate to deep learning?

Natural Language Processing (NLP) is a branch of artificial intelligence that focuses on enabling computers to understand, interpret, and generate human language in a way that is both meaningful and contextually relevant. NLP encompasses a wide range of tasks, including text classification, sentiment analysis, machine translation, and speech recognition. Deep learning plays a crucial role in advancing NLP by providing models that automatically learn intricate patterns in language data. Architectures such as recurrent neural networks (RNNs), transformers, and convolutional neural networks (CNNs) have significantly enhanced the accuracy and efficiency of NLP systems, allowing for more nuanced language processing than traditional rule-based approaches. In essence, deep learning lets machines learn language patterns directly from data rather than from hand-written rules.

What are the key benefits of using deep learning in NLP applications?

The key benefits of using deep learning in Natural Language Processing (NLP) applications lie in its ability to significantly enhance the accuracy and efficiency of language processing tasks. Deep learning models, such as neural networks and transformers, can automatically learn intricate patterns and features from vast amounts of data, enabling them to better understand and generate human language. These models excel at capturing contextual nuances, handling complex language structures, and improving the overall performance of NLP systems. By leveraging deep learning techniques, NLP applications can achieve higher levels of precision in tasks like machine translation, sentiment analysis, chatbots, and text summarization, ultimately leading to more effective communication and interaction between humans and machines.

How do recurrent neural networks (RNNs) play a role in deep learning for NLP?

Recurrent Neural Networks (RNNs) play a crucial role in deep learning for Natural Language Processing (NLP) by addressing the sequential nature of language data. Unlike feedforward neural networks, RNNs process a sequence one element at a time and maintain a hidden state that summarizes past inputs, allowing them to capture contextual dependencies within a sequence of words. This ability makes RNNs well-suited for tasks like language modeling, speech recognition, and machine translation, where understanding the order of words is essential. Although they capture short-term dependencies well, RNNs can struggle with long-range dependencies because of vanishing or exploding gradients. Variations such as Long Short-Term Memory (LSTM) and Gated Recurrent Unit (GRU) networks were developed to mitigate these problems and improve the performance of RNNs in NLP tasks.
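
As a rough illustration of how an RNN carries memory from one word to the next, the sketch below implements the basic recurrence h_t = tanh(W_xh x_t + W_hh h_{t-1} + b) in plain Python. The names `rnn_step` and `run_rnn`, the weights, and the two-dimensional "word vectors" are hypothetical toy values, not trained parameters.

```python
import math
from typing import List

Vector = List[float]
Matrix = List[List[float]]


def rnn_step(x: Vector, h_prev: Vector, W_xh: Matrix, W_hh: Matrix,
             b: Vector) -> Vector:
    """One recurrence step: h_t = tanh(W_xh x_t + W_hh h_{t-1} + b)."""
    h_new = []
    for i in range(len(h_prev)):
        pre = b[i]
        pre += sum(W_xh[i][j] * x[j] for j in range(len(x)))
        pre += sum(W_hh[i][j] * h_prev[j] for j in range(len(h_prev)))
        h_new.append(math.tanh(pre))
    return h_new


def run_rnn(inputs: List[Vector], W_xh: Matrix, W_hh: Matrix,
            b: Vector) -> Vector:
    """Feed a sequence through the RNN and return the final hidden state."""
    h = [0.0] * len(b)  # start with an empty memory
    for x in inputs:
        h = rnn_step(x, h, W_xh, W_hh, b)
    return h


# Toy parameters (assumed values, chosen only for illustration).
W_xh = [[0.5, -0.3], [0.8, 0.2]]
W_hh = [[0.1, 0.4], [-0.2, 0.3]]
b = [0.0, 0.0]

word_a, word_b = [1.0, 0.0], [0.0, 1.0]
state_ab = run_rnn([word_a, word_b], W_xh, W_hh, b)
state_ba = run_rnn([word_b, word_a], W_xh, W_hh, b)
print(state_ab, state_ba)
```

The two final states differ even though both sequences contain the same words, which shows that the hidden state encodes word order. Because each step passes the state through another tanh and weight matrix, signals from early inputs tend to shrink or blow up over long sequences, which is the gradient issue that LSTM and GRU cells are designed to reduce.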

Can you explain the significance of transformers in modern NLP models?

The significance of transformers in modern NLP models lies in their revolutionary architecture that has transformed the way machines process language. Unlike traditional sequence models, transformers leverage attention mechanisms to capture long-range dependencies and context within text efficiently. This capability allows transformers to handle large datasets and complex language patterns with remarkable accuracy, making them ideal for a wide range of NLP tasks. By enabling parallel processing and focusing on relevant parts of input data, transformers have greatly improved tasks such as machine translation, sentiment analysis, and text generation, pushing the boundaries of what is achievable in natural language processing with deep learning.
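
The attention mechanism described here can be sketched in a few lines of plain Python. This is a simplified, single-head version of scaled dot-product attention in which each token vector serves directly as query, key, and value; real transformers add learned projection matrices, multiple heads, and positional information. The function names and toy vectors are assumptions made for illustration.

```python
import math
from typing import List, Tuple

Vector = List[float]


def softmax(scores: Vector) -> Vector:
    """Turn raw scores into positive weights that sum to 1."""
    highest = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - highest) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def scaled_dot_product_attention(
    queries: List[Vector], keys: List[Vector], values: List[Vector]
) -> Tuple[List[Vector], List[Vector]]:
    """For each query, mix the values according to query-key similarity."""
    d_k = len(keys[0])
    outputs, all_weights = [], []
    for q in queries:
        scores = [
            sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k) for k in keys
        ]
        weights = softmax(scores)
        mixed = [
            sum(w * v[j] for w, v in zip(weights, values))
            for j in range(len(values[0]))
        ]
        outputs.append(mixed)
        all_weights.append(weights)
    return outputs, all_weights


# Three toy token vectors; tokens 0 and 2 are identical on purpose.
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 0.0]]
outputs, weights = scaled_dot_product_attention(tokens, tokens, tokens)
print(weights[0])
```

Token 0 assigns more weight to itself and to the identical token 2 than to token 1, regardless of how far apart they sit in the sequence. Each query is handled independently of the others, which is why the computation parallelizes well.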

What are some popular deep learning frameworks used for NLP tasks?

When it comes to implementing NLP tasks using deep learning, several popular frameworks stand out due to their robust features and community support. TensorFlow, developed by Google, is widely used for its flexibility and scalability, making it ideal for building complex models. PyTorch, favored for its dynamic computation graph and ease of use, is particularly popular among researchers and developers who prefer an intuitive approach to model building. Hugging Face Transformers is another essential tool in the NLP community, offering pre-trained models like BERT and GPT that can be fine-tuned for various language tasks. Additionally, Keras provides a high-level API that simplifies the creation of neural networks, making it accessible for beginners while still powerful enough for advanced applications. Each of these frameworks offers unique advantages that cater to different preferences and project requirements in the field of NLP with deep learning.

How has deep learning improved machine translation compared to traditional methods?

Deep learning has revolutionized machine translation by enabling models to automatically learn complex language patterns from vast amounts of data, eliminating the need for manual feature engineering. Traditional methods often struggled to capture contextual nuances and to handle varied language structures effectively. In contrast, deep learning models, such as encoder-decoder transformers, use attention mechanisms to focus on the relevant parts of the source sentence while generating each target word, and they maintain context across sequences more efficiently. This results in significantly improved translation quality that better captures the subtleties of each language and produces more accurate and natural translations.

What challenges are associated with training deep learning models for NLP tasks?

Training deep learning models for NLP tasks comes with several challenges that researchers and practitioners often encounter. One significant challenge is the need for large amounts of labeled data to train these models effectively. Acquiring and annotating such datasets can be time-consuming and costly. Additionally, deep learning models for NLP are computationally intensive, requiring substantial computing power and memory resources to train efficiently. Another challenge is the potential for overfitting, where the model performs well on the training data but fails to generalize to unseen data. Balancing model complexity and generalization capabilities is crucial in overcoming this challenge. Furthermore, fine-tuning hyperparameters and optimizing model architectures can also pose challenges in achieving optimal performance for specific NLP tasks. Addressing these challenges requires a combination of expertise in deep learning, NLP domain knowledge, and careful experimentation to develop robust and effective solutions.
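
One common guard against the overfitting described above is early stopping: watch the loss on held-out validation data and stop once it no longer improves. The sketch below is a minimal, framework-independent version; the function name, the `patience` parameter, and the loss values are hypothetical.

```python
from typing import List


def early_stopping_epoch(val_losses: List[float], patience: int = 2) -> int:
    """Return the epoch index with the best validation loss seen before
    `patience` consecutive epochs pass without improvement."""
    best_loss = float("inf")
    best_epoch = -1
    epochs_without_improvement = 0
    for epoch, loss in enumerate(val_losses):
        if loss < best_loss:
            best_loss = loss
            best_epoch = epoch
            epochs_without_improvement = 0
        else:
            epochs_without_improvement += 1
            if epochs_without_improvement >= patience:
                break  # validation loss has stalled; stop training
    return best_epoch


# Made-up validation losses: improvement stalls after epoch 2.
val_losses = [0.90, 0.70, 0.60, 0.62, 0.65, 0.50]
best = early_stopping_epoch(val_losses, patience=2)
print(best)
```

With a patience of 2, training stops after epoch 4 and the weights from epoch 2 would be kept, so the later value of 0.50 is never reached. That is the trade-off of a small patience setting: it saves compute but may stop before a late improvement.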

Are there any ethical considerations related to using deep learning in NLP applications?

The integration of deep learning in NLP applications raises important ethical considerations that must be carefully addressed. One key concern is the potential for bias in the data used to train these models, which can lead to discriminatory outcomes, especially in sensitive areas like hiring or criminal justice. Additionally, the opacity of deep learning models poses challenges in understanding how decisions are made, making it difficult to ensure accountability and transparency. Privacy issues also arise as these models may inadvertently expose personal information during language processing tasks. As we harness the power of deep learning in NLP, it is crucial to prioritize ethical practices, promote diversity in dataset curation, and establish clear guidelines for responsible deployment to mitigate these ethical challenges effectively.

How can businesses leverage NLP with deep learning to enhance customer experience?

Businesses can leverage NLP with deep learning to enhance customer experience in various ways. By implementing advanced NLP models, businesses can analyze customer feedback, sentiment, and interactions more effectively to gain valuable insights into customer preferences and behaviors. This enables personalized recommendations, targeted marketing campaigns, and improved customer support through chatbots that can understand and respond to customer queries with greater accuracy and efficiency. Additionally, NLP-powered systems can help businesses automate tasks like email responses or social media engagement, allowing for faster response times and better overall customer satisfaction. Ultimately, integrating NLP with deep learning technologies empowers businesses to create a more seamless and tailored customer experience that fosters loyalty and drives growth.