Understanding Natural Language Processing (NLP) Concepts

Natural Language Processing (NLP) is a branch of artificial intelligence that focuses on the interaction between computers and human language. It involves the development of algorithms and models that enable computers to understand, interpret, and generate human language in a way that is both meaningful and useful.

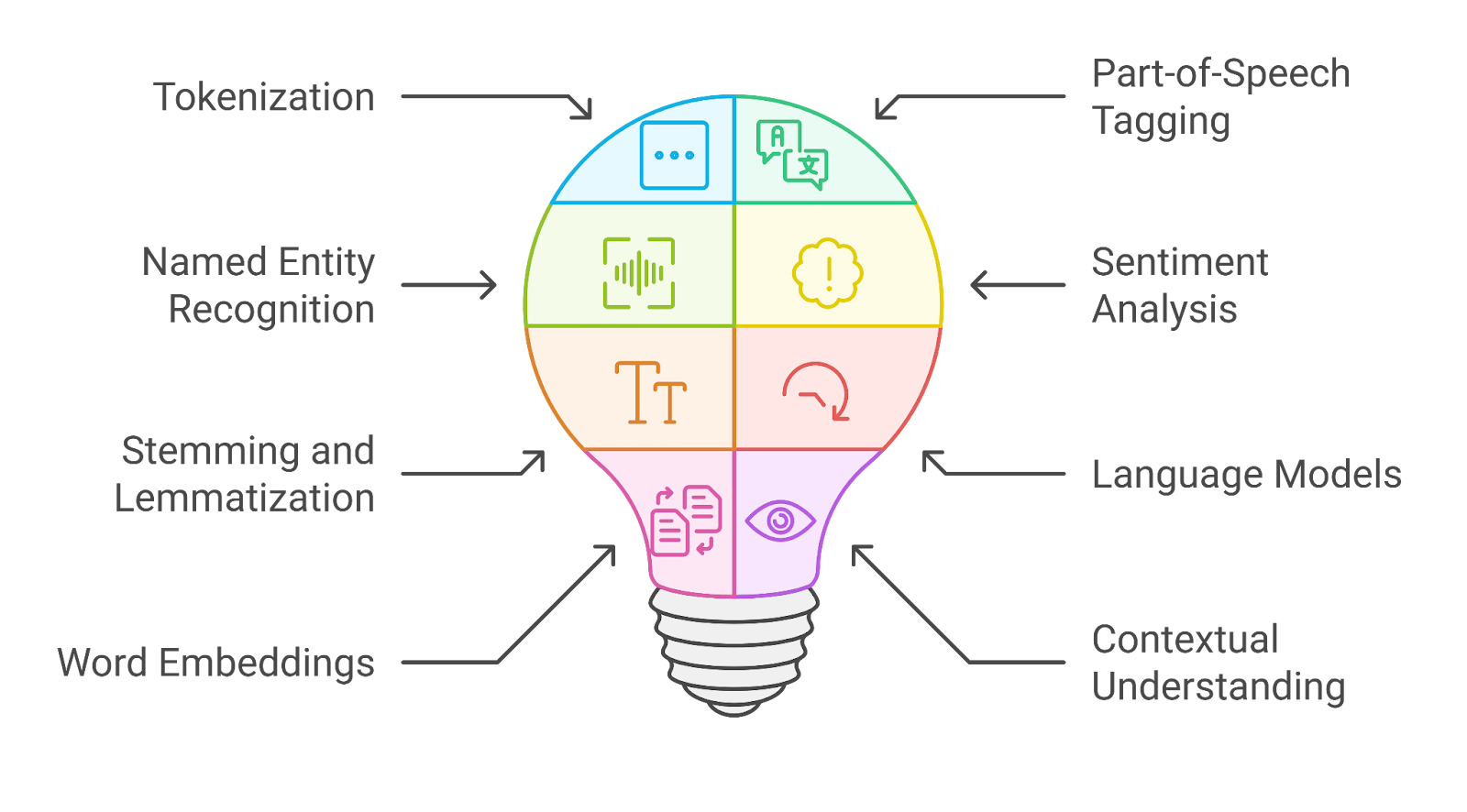

Key Concepts in NLP:

Tokenization: Tokenization is the process of breaking down text into smaller units, such as words or sentences. This allows the computer to analyze and process text more effectively.
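As a rough illustration of the idea above, here is a minimal sketch of sentence and word tokenization using only Python's standard library. The function names `split_sentences` and `tokenize_words` are hypothetical, and the regular expressions are deliberately naive (they will mis-split abbreviations such as "Dr."); production tokenizers handle many more edge cases.

```python
import re

def split_sentences(text: str) -> list[str]:
    # Naive rule (an assumption for this sketch): a sentence ends at
    # '.', '!' or '?' followed by whitespace.
    return [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]

def tokenize_words(sentence: str) -> list[str]:
    # A token is either a word (optionally with one internal apostrophe,
    # as in "isn't") or a single punctuation character.
    return re.findall(r"\w+(?:'\w+)?|[^\w\s]", sentence)

text = "NLP is fun. Isn't tokenization the first step?"
for sentence in split_sentences(text):
    print(tokenize_words(sentence))
```

Keeping punctuation as separate tokens is a design choice: later steps such as tagging or parsing often need to know where clauses and sentences end.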

Part-of-Speech Tagging: Part-of-speech tagging involves labeling words in a text with their corresponding part of speech, such as noun, verb, adjective, etc. This helps computers understand the grammatical structure of sentences.
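To make the labeling step concrete, the sketch below tags words by looking them up in a tiny hand-written dictionary. This is a toy under stated assumptions: the `LEXICON` table, the tag names, and the `tag` function are all hypothetical, and real taggers are statistical or neural models that use context to resolve ambiguous words (for example "book" as noun or verb).

```python
import re

# Hypothetical mini-lexicon for illustration only; real taggers learn
# word/tag associations from annotated corpora.
LEXICON = {
    "the": "DET", "a": "DET",
    "cat": "NOUN", "dog": "NOUN", "mat": "NOUN",
    "sat": "VERB", "chased": "VERB",
    "on": "ADP",
    "lazy": "ADJ",
}

def tag(sentence: str) -> list[tuple[str, str]]:
    tokens = re.findall(r"\w+|[^\w\s]", sentence)
    tagged = []
    for tok in tokens:
        if re.fullmatch(r"[^\w\s]", tok):
            tagged.append((tok, "PUNCT"))
        else:
            # Unknown words get the placeholder tag "X".
            tagged.append((tok, LEXICON.get(tok.lower(), "X")))
    return tagged

print(tag("The lazy dog sat on the mat."))
```

A lookup table cannot disambiguate by context, which is exactly the gap that trained taggers fill.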

Named Entity Recognition: Named Entity Recognition (NER) is the task of identifying and classifying named entities in text, such as names of people, organizations, locations, etc. This is important for tasks like information extraction and sentiment analysis.
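One simple way to picture NER is a gazetteer lookup: scan the text for names from a known list and attach a label to each match. The sketch below does exactly that. The `GAZETTEER` entries, the label names, and `find_entities` are hypothetical, and the example sentence uses made-up names; practical NER systems are trained models that can also recognize entities they have never seen in a list.

```python
import re

# Hypothetical gazetteer: a fixed list of known names and their types.
GAZETTEER = {
    "Alice Smith": "PERSON",
    "Acme Corp": "ORGANIZATION",
    "Paris": "LOCATION",
}

def find_entities(text: str) -> list[tuple[str, str]]:
    found = []
    for name, label in GAZETTEER.items():
        # \b keeps "Paris" from matching inside a longer word.
        for match in re.finditer(r"\b" + re.escape(name) + r"\b", text):
            found.append((match.start(), match.group(), label))
    found.sort()  # report entities in the order they appear in the text
    return [(name, label) for _, name, label in found]

print(find_entities("Alice Smith joined Acme Corp in Paris."))
```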

Sentiment Analysis: Sentiment analysis involves determining the sentiment or emotion expressed in a piece of text. NLP models can be trained to classify text as positive, negative, or neutral based on the language used.
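The positive/negative/neutral classification described above can be sketched with a word-counting approach. This is a lexicon-based baseline, not a trained model: the `POSITIVE` and `NEGATIVE` word sets and the `classify_sentiment` function are hypothetical and far too small for real use.

```python
import re

# Hypothetical sentiment lexicons, kept tiny for illustration.
POSITIVE = {"good", "great", "love", "excellent", "happy"}
NEGATIVE = {"bad", "terrible", "hate", "poor", "sad"}

def classify_sentiment(text: str) -> str:
    words = re.findall(r"[a-z']+", text.lower())
    # Score = number of positive words minus number of negative words.
    score = sum(w in POSITIVE for w in words) - sum(w in NEGATIVE for w in words)
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"

for review in ["I love this great phone",
               "The battery is terrible",
               "The package arrived on Tuesday"]:
    print(review, "->", classify_sentiment(review))
```

Counting words ignores negation and sarcasm ("not good" would score as positive here), which is one reason trained classifiers generally outperform this kind of baseline.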

Machine Translation: Machine translation is the task of automatically translating text from one language to another. Modern systems are typically neural models trained on large collections of parallel text, and they have made cross-language communication far more practical.

Text Generation: Text generation involves creating new pieces of text based on input data or prompts. NLP models can be used to generate realistic-sounding text for various applications like chatbots, content creation, and more.
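To show the basic mechanism of generating text from a prompt, the sketch below uses a bigram (Markov chain) model: it records which word follows which in a small corpus, then repeatedly samples a next word. This is a much simpler technique than the neural models behind modern chatbots and is swapped in here only for illustration. The names `build_bigram_model` and `generate`, the corpus, and the `seed` parameter are all assumptions of this example.

```python
import random
from collections import defaultdict

def build_bigram_model(corpus: str) -> dict:
    # Map each word to the list of words observed right after it.
    model = defaultdict(list)
    words = corpus.split()
    for current, following in zip(words, words[1:]):
        model[current].append(following)
    return model

def generate(model: dict, start: str, max_words: int = 10, seed: int = 0) -> str:
    rng = random.Random(seed)  # fixed seed makes the output repeatable
    word = start
    output = [word]
    for _ in range(max_words - 1):
        followers = model.get(word)
        if not followers:
            break  # no known continuation for this word
        word = rng.choice(followers)
        output.append(word)
    return " ".join(output)

corpus = "the cat sat on the mat and the dog sat on the rug"
model = build_bigram_model(corpus)
print(generate(model, "the", max_words=8, seed=1))
```

Because each step looks only at the previous word, the output is locally plausible but has no long-range coherence. Neural language models address this by conditioning on much longer context.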

NLP continues to evolve rapidly, driven by advances in deep learning and neural network architectures such as the transformer. As researchers and developers push these models further, new applications and capabilities are likely to follow.

Understanding NLP: Key Concepts, Components, and Frequently Asked Questions

- What are the 5 components of NLP?

- What are the 5 steps in NLP?

- What are the 4 elements of NLP?

- Is ChatGPT NLP or LLM?

- What are the 7 layers of NLP?

- What are NLP concepts?

- What are the 4 pillars of NLP?

What are the 5 components of NLP?

There is no single official list of five NLP components, so the answer depends on the source. The five covered in this article are tokenization, part-of-speech tagging, named entity recognition, sentiment analysis, and machine translation. Tokenization breaks text into smaller units for analysis, and part-of-speech tagging assigns grammatical labels to words. Named entity recognition identifies and classifies entities such as names and locations, sentiment analysis determines the emotional tone of a text, and machine translation converts text from one language to another. Many introductory courses use a different five-part breakdown based on levels of linguistic analysis: lexical analysis, syntactic analysis, semantic analysis, discourse integration, and pragmatic analysis.

What are the 5 steps in NLP?

NLP has no fixed five-step recipe, but the tasks covered in this article can be read as one: tokenization, part-of-speech tagging, named entity recognition, sentiment analysis, and text generation. The first three are often chained as processing stages. Tokenization splits the text into units, part-of-speech tagging labels each word with its grammatical category, and named entity recognition marks names, locations, and similar entities. Sentiment analysis and text generation are better understood as end tasks built on that groundwork: the former determines the emotional tone of a text, and the latter produces new text from input data or a prompt.

What are the 4 elements of NLP?

"The four elements of NLP" is not a standardized term. In this article it refers to four core tasks: tokenization, part-of-speech tagging, named entity recognition, and sentiment analysis. Tokenization breaks text into smaller units, part-of-speech tagging assigns grammatical labels to words, and named entity recognition identifies and categorizes names, locations, and other entities. Sentiment analysis determines the emotional tone or polarity of a text, which helps in understanding user opinion.

Is ChatGPT NLP or LLM?

ChatGPT is both, because the two terms are not alternatives. Natural Language Processing (NLP) is the broad field of techniques that enable computers to understand, interpret, and generate human language. Large Language Models (LLMs) are one class of models within that field: neural networks trained on very large text datasets to predict and generate text. ChatGPT is a conversational application built on an LLM, so it is an NLP system by definition. It does rely on tokenization to split input into units the model can process, but it does not run separate components for tasks such as sentiment analysis. Its ability to handle those tasks comes from the language model itself.

What are the 7 layers of NLP?

NLP has no standard seven-layer model. When the phrase is used, it usually refers to successive levels of linguistic analysis, which in this article are tokenization, part-of-speech tagging, named entity recognition, parsing, semantic analysis, discourse integration, and pragmatics. The earlier levels deal with individual words and their grammatical roles, parsing recovers sentence structure, semantic analysis addresses meaning, and the last two consider context across sentences and the speaker's intent.

What are NLP concepts?

NLP concepts are the fundamental principles and techniques used in Natural Language Processing. They include tokenization, part-of-speech tagging, named entity recognition, sentiment analysis, machine translation, and text generation. Understanding these concepts shows how machines analyze and interpret language, and it is the starting point for building applications that improve communication between humans and computers.

What are the 4 pillars of NLP?

"The four pillars of NLP" is an informal phrase rather than an established framework. Here it covers the same four foundational tasks: tokenization, part-of-speech tagging, named entity recognition, and sentiment analysis. Tokenization breaks text into smaller units for analysis, and part-of-speech tagging assigns grammatical labels to words. Named entity recognition identifies and classifies named entities such as people or organizations, and sentiment analysis determines the sentiment expressed in a text.