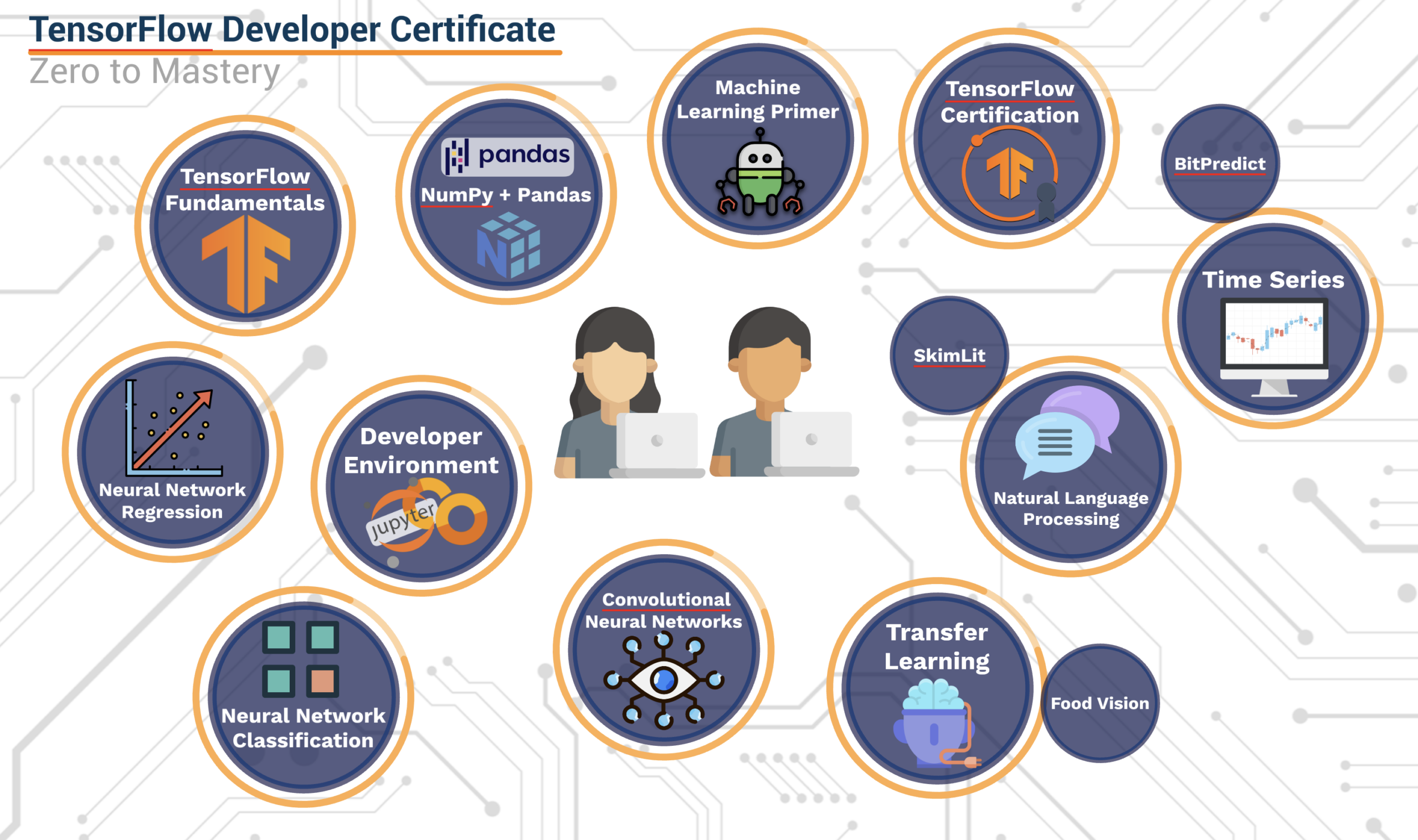

Exploring Deep Learning with TensorFlow

Deep learning has revolutionized the field of artificial intelligence, enabling machines to perform tasks that were once thought to be exclusive to humans. At the forefront of this technological advancement is TensorFlow, an open-source machine learning framework developed by the Google Brain team. TensorFlow has become a popular choice among researchers and developers for building and deploying deep learning models.

What is TensorFlow?

TensorFlow is a comprehensive and flexible platform for building machine learning models. It provides a collection of tools, libraries, and community resources that help developers construct and train machine learning models. The framework runs on CPUs as well as on hardware accelerators such as GPUs and TPUs, and these accelerators can substantially shorten the training time of complex neural networks.

Key Features of TensorFlow

- Scalability: TensorFlow can scale from running on a single device to thousands of machines in a distributed system, making it suitable for both small-scale experiments and large-scale deployments.

- Ecosystem: With an extensive ecosystem that includes libraries such as Keras for high-level neural network APIs, TensorBoard for visualization, and TensorFlow Lite for mobile deployment, developers have access to a wide range of tools to enhance their projects.

- Flexibility: TensorFlow supports both eager execution (imperative programming), which is the default in TensorFlow 2, and graph execution (declarative programming) through `tf.function`. This gives users flexibility in how models are built and trained.

- Community Support: As an open-source project with contributions from thousands of developers worldwide, TensorFlow benefits from continuous improvement and robust community support.
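The eager/graph distinction can be seen in a few lines. This is a minimal sketch, assuming TensorFlow 2.x: the same Python function runs op by op when called directly and is traced into a graph when wrapped with `tf.function`. The function name `scaled_sum` is hypothetical.

```python
import tensorflow as tf


def scaled_sum(x, scale):
    # Plain Python calling TensorFlow ops: executes eagerly, op by op.
    return tf.reduce_sum(x) * scale


# tf.function traces the same function into a graph on its first call;
# later calls with compatible inputs reuse the traced graph.
scaled_sum_graph = tf.function(scaled_sum)

x = tf.constant([1.0, 2.0, 3.0])
eager_result = scaled_sum(x, 2.0)
graph_result = scaled_sum_graph(x, 2.0)

print(tf.executing_eagerly())                      # True: top-level code runs eagerly
print(float(eager_result), float(graph_result))    # 12.0 12.0
```

Both paths compute the same result; graph execution mainly pays off for larger functions that are called many times, such as a training step.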

Deep Learning with TensorFlow

TensorFlow is designed with deep learning in mind. It provides pre-built functions and operations that simplify the process of creating complex neural networks. Here’s how deep learning can be implemented using TensorFlow:

- Data Preparation: Before training a model, data must be collected and preprocessed. This typically involves normalizing inputs, augmenting datasets, and converting data into tensors, the multi-dimensional arrays that are TensorFlow’s fundamental data structure.

- Model Building: Using layers provided by Keras or custom operations in low-level APIs, developers can construct neural network architectures tailored to specific tasks such as image recognition or natural language processing.

- Training: With the model defined, it can be trained using backpropagation: TensorFlow computes the gradients automatically, and optimizers such as SGD or Adam use them to update the model’s weights. Users can set hyperparameters such as the learning rate or batch size to fine-tune performance.

- Evaluation & Deployment: After training is complete, models are evaluated on held-out test datasets to measure how well they generalize. Successful models can then be deployed across various platforms using tools like TensorFlow Serving, or converted into lightweight versions with TensorFlow Lite for mobile and embedded devices.
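The four stages above can be sketched end to end with Keras. This is a minimal illustration, assuming TensorFlow 2.x with `tf.keras`; the synthetic dataset, layer sizes, and hyperparameters are placeholders chosen for brevity, not recommendations.

```python
import numpy as np
import tensorflow as tf

tf.keras.utils.set_random_seed(0)  # reproducible data and weight initialization

# 1. Data preparation (hypothetical synthetic data): the label is 1 when
#    the four features sum to more than 2.
features = np.random.rand(1000, 4).astype("float32")
labels = (features.sum(axis=1) > 2.0).astype("int32")
train_x, test_x = features[:800], features[800:]
train_y, test_y = labels[:800], labels[800:]

# Normalize with statistics from the training split only.
mean, std = train_x.mean(axis=0), train_x.std(axis=0)
train_x = (train_x - mean) / std
test_x = (test_x - mean) / std

# Convert to batched tf.data pipelines of tensors.
train_ds = tf.data.Dataset.from_tensor_slices((train_x, train_y)).shuffle(800).batch(32)
test_ds = tf.data.Dataset.from_tensor_slices((test_x, test_y)).batch(32)

# 2. Model building with Keras layers.
model = tf.keras.Sequential([
    tf.keras.Input(shape=(4,)),
    tf.keras.layers.Dense(16, activation="relu"),
    tf.keras.layers.Dense(2),  # one logit per class
])

# 3. Training: gradients come from backpropagation, the optimizer applies them.
model.compile(
    optimizer=tf.keras.optimizers.Adam(learning_rate=1e-2),
    loss=tf.keras.losses.SparseCategoricalCrossentropy(from_logits=True),
    metrics=["accuracy"],
)
model.fit(train_ds, epochs=10, verbose=0)

# 4. Evaluation on the held-out split.
results = model.evaluate(test_ds, verbose=0, return_dict=True)
print(results)
```

The deployment step is left out because it depends on the target (a server running TensorFlow Serving versus a phone running TensorFlow Lite).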

The Future of Deep Learning with TensorFlow

TensorFlow continues to evolve rapidly, with each new release bringing enhanced capabilities and optimizations. As AI research advances further into areas such as reinforcement learning and unsupervised learning, TensorFlow is well placed to remain one of the leading platforms, thanks both to its powerful features and to the global developer community that contributes to its development every day.

Together, these factors make deep learning with TensorFlow accessible to those just starting out, while still giving experienced professionals ample room to grow and build on their existing knowledge, helping to pave the way for breakthroughs across many industries.

9 Essential Tips for Mastering Deep Learning with TensorFlow

- Start with the basics of deep learning before diving into TensorFlow.

- Understand the architecture of neural networks and how they work in TensorFlow.

- Experiment with different network structures and hyperparameters to optimize performance.

- Utilize pre-trained models available in TensorFlow for transfer learning.

- Regularly visualize your model’s performance using TensorBoard.

- Implement early stopping to prevent overfitting during training.

- Utilize GPU support in TensorFlow for faster training on large datasets.

- Explore various loss functions and optimizers to improve model accuracy.

- Stay updated with the latest TensorFlow updates and best practices.

Start with the basics of deep learning before diving into TensorFlow.

It is essential to master the fundamentals of deep learning before delving into the intricacies of TensorFlow. Understanding the core concepts of neural networks, activation functions, loss functions, and optimization algorithms lays a solid foundation for using TensorFlow to build and train sophisticated machine learning models. With these basics in place, you will understand what TensorFlow is doing on your behalf and can apply its capabilities far more effectively in your projects.
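To connect these fundamentals to TensorFlow’s lower-level API, here is a minimal sketch, assuming TensorFlow 2.x, of a single neuron (a weight, a bias, and a sigmoid activation) trained with a binary cross-entropy loss and plain gradient descent. `tf.GradientTape` records the forward pass so that gradients can be computed; the toy data and learning rate are illustrative.

```python
import tensorflow as tf

# Toy data (hypothetical): the label is 1 when the single feature is positive.
x = tf.constant([[-2.0], [-1.0], [1.0], [2.0]])
y = tf.constant([[0.0], [0.0], [1.0], [1.0]])

# Parameters of one neuron.
w = tf.Variable([[0.0]])
b = tf.Variable([0.0])
learning_rate = 0.5


def loss_fn():
    logits = tf.matmul(x, w) + b                      # weighted sum plus bias
    probs = tf.sigmoid(logits)                        # activation function
    probs = tf.clip_by_value(probs, 1e-7, 1 - 1e-7)   # avoid log(0)
    # Binary cross-entropy averaged over the batch.
    return -tf.reduce_mean(y * tf.math.log(probs) + (1 - y) * tf.math.log(1 - probs))


initial_loss = float(loss_fn())   # ln 2 when w = b = 0
for step in range(100):
    with tf.GradientTape() as tape:
        loss = loss_fn()
    dw, db = tape.gradient(loss, [w, b])   # backpropagation
    w.assign_sub(learning_rate * dw)       # gradient descent update
    b.assign_sub(learning_rate * db)

print(initial_loss, float(loss_fn()))     # the loss should have decreased
```

In practice Keras layers, losses, and optimizers wrap exactly these pieces, which is why understanding them first makes the higher-level API easier to reason about.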

Understand the architecture of neural networks and how they work in TensorFlow.

To harness TensorFlow effectively for deep learning, it is crucial to understand the architecture of neural networks and how they are implemented in the framework. Neural networks consist of interconnected layers of nodes (neurons) that transform input data into meaningful outputs; a typical dense layer, for example, computes a weighted sum of its inputs, adds a bias, and applies an activation function. Knowing how these layers fit together, and how loss functions and optimization algorithms drive their training, is essential for building and training robust models in TensorFlow. With that understanding, developers can make full use of the framework to tackle complex real-world problems.
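As a concrete anchor for "layers that transform input", a Keras `Dense` layer computes `activation(inputs @ kernel + bias)`. The minimal sketch below, assuming TensorFlow 2.x, builds one such layer and reproduces its output by hand from its weights; the layer and batch sizes are arbitrary.

```python
import tensorflow as tf

tf.keras.utils.set_random_seed(0)

layer = tf.keras.layers.Dense(3, activation="relu")
inputs = tf.random.normal((2, 4))    # batch of 2 examples, 4 features each

outputs = layer(inputs)              # first call creates kernel (4x3) and bias (3,)

# The same computation written out explicitly: weighted sum, bias, then ReLU.
kernel, bias = layer.get_weights()
manual = tf.nn.relu(tf.matmul(inputs, kernel) + bias)

print(outputs.shape)                                   # (2, 3)
print(float(tf.reduce_max(tf.abs(outputs - manual))))  # ~0.0
```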

Experiment with different network structures and hyperparameters to optimize performance.

Experimenting with different network structures and hyperparameters is crucial for optimizing the performance of deep learning models in TensorFlow. The architecture of a neural network, including the number of layers and neurons, can significantly impact how well the model learns from data. By trying various configurations, developers can identify which structures best capture the underlying patterns in their specific datasets. Additionally, adjusting hyperparameters such as learning rate, batch size, and dropout rates can further enhance model accuracy and efficiency. These parameters control how the learning process unfolds and can prevent issues like overfitting or underfitting. Through systematic experimentation and tuning, developers can achieve more robust and accurate models tailored to their unique applications.
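One simple way to make this experimentation systematic is a small grid search. The sketch below assumes TensorFlow 2.x; the `build_model` helper, the synthetic regression data, and the candidate values are hypothetical. It trains one model per combination of hidden-layer width, dropout rate, and learning rate and keeps the configuration with the lowest validation loss. Dedicated tools such as KerasTuner automate this kind of search at larger scale.

```python
import itertools

import numpy as np
import tensorflow as tf

tf.keras.utils.set_random_seed(0)

# Hypothetical regression data: y = 3*x0 - 2*x1 + noise.
x = np.random.rand(500, 2).astype("float32")
y = (3 * x[:, 0] - 2 * x[:, 1] + 0.1 * np.random.randn(500)).astype("float32").reshape(-1, 1)


def build_model(units, dropout_rate):
    # The network structure is parameterized so it can vary per trial.
    return tf.keras.Sequential([
        tf.keras.Input(shape=(2,)),
        tf.keras.layers.Dense(units, activation="relu"),
        tf.keras.layers.Dropout(dropout_rate),
        tf.keras.layers.Dense(1),
    ])


results = {}
for units, dropout_rate, learning_rate in itertools.product([8, 32], [0.0, 0.2], [1e-3, 1e-2]):
    model = build_model(units, dropout_rate)
    model.compile(optimizer=tf.keras.optimizers.Adam(learning_rate=learning_rate), loss="mse")
    history = model.fit(x, y, validation_split=0.2, epochs=20, batch_size=32, verbose=0)
    # Score each configuration by its final validation loss.
    results[(units, dropout_rate, learning_rate)] = history.history["val_loss"][-1]

best = min(results, key=results.get)
print("best (units, dropout, learning rate):", best, "val_loss:", results[best])
```

Scoring on a validation split rather than on training loss matters here: a configuration that simply memorizes the training data would otherwise look best.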

Utilize pre-trained models available in TensorFlow for transfer learning.

By utilizing pre-trained models that are readily available in TensorFlow for transfer learning, developers can leverage the knowledge and features learned from large datasets to enhance the performance of their own machine learning models. Transfer learning allows for the transfer of learned patterns and representations from one task to another, enabling faster training and improved accuracy, especially when working with limited data. By incorporating pre-trained models into their projects, developers can save time and resources while achieving better results in tasks such as image recognition, natural language processing, and more.
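A common transfer-learning pattern in Keras is to load a network pre-trained on ImageNet without its classification head, freeze it, and train a small new head on top. This sketch assumes TensorFlow 2.x and uses `tf.keras.applications.MobileNetV2` as the backbone; the 160×160 input size and the `build_transfer_model` helper are illustrative choices. With `weights="imagenet"`, Keras downloads the pre-trained weights on first use.

```python
import tensorflow as tf


def build_transfer_model(num_classes, weights="imagenet"):
    """Frozen MobileNetV2 feature extractor plus a new trainable classifier head."""
    inputs = tf.keras.Input(shape=(160, 160, 3))
    # MobileNetV2 expects pixels in [-1, 1]; map raw [0, 255] values to that range.
    x = tf.keras.layers.Rescaling(1.0 / 127.5, offset=-1.0)(inputs)

    # Pre-trained convolutional backbone without its ImageNet classifier.
    base = tf.keras.applications.MobileNetV2(
        input_shape=(160, 160, 3), include_top=False, weights=weights
    )
    base.trainable = False          # freeze the learned features
    x = base(x, training=False)     # keep BatchNorm layers in inference mode

    x = tf.keras.layers.GlobalAveragePooling2D()(x)
    outputs = tf.keras.layers.Dense(num_classes)(x)   # new head, outputs logits
    return tf.keras.Model(inputs, outputs)
```

Typical usage would be `model = build_transfer_model(num_classes=5)`, then `model.compile(...)` with `tf.keras.losses.SparseCategoricalCrossentropy(from_logits=True)` and `model.fit(...)` on your own labeled images. Only the new `Dense` head is trained at first; a common follow-up is to unfreeze some of the top backbone layers and fine-tune them with a low learning rate.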

Regularly visualize your model’s performance using TensorBoard.

Regularly visualizing your model’s performance with TensorBoard is one of the most useful habits in TensorFlow deep learning. TensorBoard gives you valuable insight into how your model is training and performing over time. Visualizations such as loss curves, accuracy metrics, and weight histograms can help you identify potential issues, track improvements, and make informed decisions about optimizing your model. Monitoring these visualizations lets you fine-tune your deep learning model effectively and ultimately achieve better results in your machine learning tasks.
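A minimal sketch, assuming TensorFlow 2.x, of logging a Keras training run to TensorBoard with the `tf.keras.callbacks.TensorBoard` callback. The `logs/run-...` directory naming scheme and the synthetic data are illustrative.

```python
import os
import time

import numpy as np
import tensorflow as tf

# Hypothetical data for a small binary classifier.
x = np.random.rand(256, 8).astype("float32")
y = (x[:, 0] > 0.5).astype("float32").reshape(-1, 1)

model = tf.keras.Sequential([
    tf.keras.Input(shape=(8,)),
    tf.keras.layers.Dense(16, activation="relu"),
    tf.keras.layers.Dense(1, activation="sigmoid"),
])
model.compile(optimizer="adam", loss="binary_crossentropy", metrics=["accuracy"])

# One sub-directory per run makes it easy to compare runs side by side.
log_dir = os.path.join("logs", time.strftime("run-%Y%m%d-%H%M%S"))
# histogram_freq=1 also records weight histograms every epoch.
tensorboard_cb = tf.keras.callbacks.TensorBoard(log_dir=log_dir, histogram_freq=1)

model.fit(x, y, validation_split=0.2, epochs=5, verbose=0, callbacks=[tensorboard_cb])
```

After training, running `tensorboard --logdir logs` and opening the URL it prints shows the training and validation curves for every run under `logs`.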

Implement early stopping to prevent overfitting during training.

Implementing early stopping is an important technique in TensorFlow deep learning to prevent overfitting during model training. Early stopping monitors the model’s performance on a separate validation dataset and halts training once the validation loss has stopped improving for a set number of epochs (the "patience"), which helps ensure that the model generalizes well to unseen data. This strategy not only saves computational resources but also improves the model’s ability to make accurate predictions on new data by preventing it from memorizing noise or outliers in the training set. Integrating early stopping into the training process can lead to more robust and effective deep learning models in TensorFlow.
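In Keras this is a single callback. The sketch below assumes TensorFlow 2.x; the noisy synthetic dataset, the deliberately oversized network, and the `patience=5` value are illustrative choices meant to make overfitting (and therefore early stopping) likely.

```python
import numpy as np
import tensorflow as tf

tf.keras.utils.set_random_seed(0)

# Hypothetical small, noisy dataset that a large network can easily overfit.
x = np.random.rand(200, 10).astype("float32")
y = ((x[:, 0] + 0.5 * np.random.randn(200)) > 0.5).astype("float32").reshape(-1, 1)

model = tf.keras.Sequential([
    tf.keras.Input(shape=(10,)),
    tf.keras.layers.Dense(128, activation="relu"),
    tf.keras.layers.Dense(128, activation="relu"),
    tf.keras.layers.Dense(1, activation="sigmoid"),
])
model.compile(optimizer="adam", loss="binary_crossentropy")

early_stop = tf.keras.callbacks.EarlyStopping(
    monitor="val_loss",          # watch the loss on the validation split
    patience=5,                  # tolerate 5 epochs without improvement
    restore_best_weights=True,   # roll back to the weights of the best epoch
)

history = model.fit(
    x, y,
    validation_split=0.25,
    epochs=200,                  # an upper bound; training usually stops far sooner
    verbose=0,
    callbacks=[early_stop],
)
print("epochs run:", len(history.history["loss"]))
```

Setting `restore_best_weights=True` matters: without it, the model keeps the weights from the last epoch, which by construction is `patience` epochs past the best one.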

Utilize GPU support in TensorFlow for faster training on large datasets.

When working with large datasets in TensorFlow deep learning projects, it is recommended to leverage GPU support to speed up training. GPUs execute the matrix operations at the heart of neural network training in parallel, which can significantly reduce the time each training step takes and therefore the overall training time. This lets researchers and developers iterate on their models more quickly, experiment with more architectures, and improve the overall efficiency and effectiveness of their deep learning workflows.
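A minimal sketch, assuming TensorFlow 2.x, for checking which GPUs TensorFlow can see and placing a computation on one explicitly. The matrix sizes are arbitrary. In normal use no explicit placement is needed: when a GPU is visible, TensorFlow places supported operations, including those run by Keras `model.fit`, on it automatically.

```python
import tensorflow as tf

gpus = tf.config.list_physical_devices("GPU")
print("GPUs visible to TensorFlow:", gpus)

# Optional: allocate GPU memory on demand instead of reserving it all up front.
# This must happen before the GPUs are initialized, otherwise it raises RuntimeError.
try:
    for gpu in gpus:
        tf.config.experimental.set_memory_growth(gpu, True)
except RuntimeError as err:
    print("Memory growth not changed:", err)

# Run a large matrix multiplication on the first GPU if present, else on the CPU.
device = "/GPU:0" if gpus else "/CPU:0"
with tf.device(device):
    a = tf.random.normal((1024, 1024))
    b = tf.random.normal((1024, 1024))
    c = tf.matmul(a, b)

print("computed on:", c.device)
```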

Explore various loss functions and optimizers to improve model accuracy.

To enhance the accuracy of your TensorFlow deep learning model, it is worth exploring a variety of loss functions and optimizers. Loss functions measure the discrepancy between predicted and actual values, guiding the model towards better performance. By experimenting with loss functions such as mean squared error, cross-entropy, or hinge loss, you can determine which one best suits your task; mean squared error is a typical choice for regression and cross-entropy for classification. Optimization algorithms such as stochastic gradient descent, Adam, or RMSprop then adjust the model parameters to minimize the chosen loss. Systematically exploring and selecting an appropriate loss function and optimizer can noticeably improve both the accuracy and the training efficiency of your deep learning models in TensorFlow.
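The sketch below, assuming TensorFlow 2.x, first evaluates three built-in Keras losses on a tiny example small enough to check by hand, then trains the same one-layer model with three optimizers (each with its default settings) on a hypothetical linear-regression task so their final training losses can be compared.

```python
import numpy as np
import tensorflow as tf

# Comparing loss functions on a tiny, hand-checkable example.
y_true = tf.constant([[1.0], [0.0]])
y_pred = tf.constant([[0.5], [0.5]])

mse = tf.keras.losses.MeanSquaredError()     # regression
bce = tf.keras.losses.BinaryCrossentropy()   # binary classification on probabilities
hinge = tf.keras.losses.Hinge()              # margin loss; 0/1 labels are mapped to -1/+1

print("MSE:", float(mse(y_true, y_pred)))                   # 0.25
print("binary cross-entropy:", float(bce(y_true, y_pred)))  # about 0.693 (ln 2)
print("hinge:", float(hinge(y_true, y_pred)))               # 1.0

# Comparing optimizers on the same hypothetical regression task.
tf.keras.utils.set_random_seed(0)
x = np.random.rand(512, 4).astype("float32")
y = (x @ np.array([1.0, -2.0, 0.5, 3.0], dtype="float32"))[:, None]

final_losses = {}
for name in ["sgd", "rmsprop", "adam"]:
    model = tf.keras.Sequential([tf.keras.Input(shape=(4,)), tf.keras.layers.Dense(1)])
    model.compile(optimizer=name, loss="mse")   # each optimizer with default hyperparameters
    history = model.fit(x, y, epochs=20, batch_size=32, verbose=0)
    final_losses[name] = history.history["loss"][-1]

print(final_losses)
```

Because default learning rates differ between optimizers, a fair comparison on a real task would also tune the learning rate for each one.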

Stay updated with the latest TensorFlow updates and best practices.

To maximize your success in TensorFlow deep learning projects, it is important to keep up with the latest TensorFlow releases and best practices. The field of deep learning is constantly evolving, with new techniques, tools, and optimizations introduced regularly. By staying informed about advancements in TensorFlow, you can ensure that your models use the most efficient algorithms and methods available. Following current best practices also helps you streamline your workflow, improve model performance, and stay ahead in the rapidly changing landscape of deep learning technology.