AI and Deep Learning: Transforming the Future

Artificial Intelligence (AI) and deep learning are two of the most transformative technologies of our time. They are reshaping industries, enhancing human capabilities, and driving innovation across various sectors. In this article, we explore what AI and deep learning are, how they work, and their impact on our world.

Understanding AI and Deep Learning

Artificial Intelligence refers to the simulation of human intelligence in machines that are programmed to think like humans and mimic their actions. It encompasses a wide range of technologies, including machine learning, natural language processing, robotics, and more.

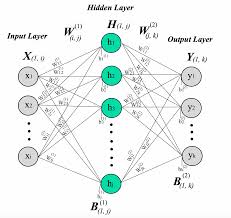

Deep Learning, a subset of machine learning, involves neural networks with three or more layers. These networks attempt to simulate the behavior of the human brain, although they are far from matching its ability, which allows them to “learn” from large amounts of data. Deep learning is what powers many AI applications today.

How Deep Learning Works

Deep learning models are based on artificial neural networks that contain layers of nodes or “neurons.” Each neuron processes input data using weights that adjust as learning proceeds. The model learns by adjusting these weights based on errors in predictions during training.
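To make the idea of weight adjustment concrete, here is a minimal sketch of a single linear neuron trained with gradient descent. It is an illustration only: the toy data, the learning rate, and the function names `predict` and `train` are assumptions made for this example, and real deep learning models stack many such units with nonlinear activations.

```python
# A single linear neuron trained with gradient descent (illustrative sketch).

def predict(weight, bias, x):
    """Weighted input plus bias; no activation, to keep the example small."""
    return weight * x + bias


def train(samples, learning_rate=0.05, epochs=500):
    """Adjust weight and bias step by step to reduce the squared prediction error."""
    weight, bias = 0.0, 0.0
    for _ in range(epochs):
        for x, target in samples:
            error = predict(weight, bias, x) - target
            # Gradient of 0.5 * error**2 with respect to each parameter.
            weight -= learning_rate * error * x
            bias -= learning_rate * error
    return weight, bias


# Toy data generated from y = 2x + 1 (an assumption for this example).
samples = [(0.0, 1.0), (1.0, 3.0), (2.0, 5.0), (3.0, 7.0)]
weight, bias = train(samples)
print(f"learned weight={weight:.3f}, bias={bias:.3f}")
```

Each pass nudges the weight and bias in the direction that shrinks the prediction error, which is the same principle that backpropagation applies across many layers.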

This process involves several steps:

- Data Collection: Gathering a large dataset relevant to the problem at hand.

- Training: Feeding data into the neural network so it can learn patterns and features.

- Tuning: Adjusting parameters for improved accuracy.

- Testing: Evaluating the model’s performance on unseen data.
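The steps above depend on keeping some data out of training so that tuning and testing use examples the model has not seen. The sketch below shows one common way to do that, a shuffled split into training, validation, and test sets. The 70/15/15 proportions and the helper name `split_dataset` are assumptions chosen for illustration, not a prescribed recipe.

```python
import random


def split_dataset(rows, train_pct=70, val_pct=15, seed=0):
    """Shuffle a copy of rows and split it into train, validation, and test parts."""
    shuffled = list(rows)
    random.Random(seed).shuffle(shuffled)  # fixed seed so the split is repeatable
    n_train = len(shuffled) * train_pct // 100
    n_val = len(shuffled) * val_pct // 100
    train = shuffled[:n_train]                  # used to fit the weights
    val = shuffled[n_train:n_train + n_val]     # used while tuning parameters
    test = shuffled[n_train + n_val:]           # held back for the final evaluation
    return train, val, test


rows = list(range(100))  # stand-in for 100 collected examples
train_rows, val_rows, test_rows = split_dataset(rows)
print(len(train_rows), len(val_rows), len(test_rows))
```

Tuning against the validation set, and touching the test set only once at the end, helps keep the final score an honest estimate of performance on unseen data.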

The Impact of AI and Deep Learning

The impact of AI and deep learning is profound across multiple domains:

- Healthcare: AI systems can help clinicians detect some diseases earlier by analyzing medical images for patterns that are easy to miss.

- Agriculture: Farmers use AI-driven insights for crop monitoring, pest control, and yield prediction to enhance productivity.

- Finance: Financial institutions leverage AI for fraud detection, risk management, and personalized banking experiences.

- Automotive: Autonomous vehicles rely heavily on deep learning algorithms for navigation and safety features.

The Future of AI and Deep Learning

The future holds immense potential as these technologies continue to evolve. Key areas likely to see significant advancements include natural language understanding, real-time translation services, personalized education tools, and advanced robotics in manufacturing.

Ethical considerations, particularly around privacy, will be crucial as society navigates a new era of intelligent systems that can not only perform tasks but also make decisions autonomously under certain conditions. Governance frameworks must therefore evolve alongside the technology to ensure responsible use while maximizing the benefits of artificial intelligence and related disciplines such as deep learning.

Conclusion

In conclusion, AI and its subset deep learning are powerful forces shaping modern life, affecting everything from daily conveniences to strategic decision-making in corporate boardrooms worldwide. Challenges remain, particularly around ethics, transparency, and accountability, but opportunities abound for those willing to embrace the change these innovations bring.

7 Essential Tips for Mastering AI and Deep Learning

- Understand the basics of machine learning before diving into deep learning.

- Choose the right framework and tools based on your project requirements.

- Preprocess and clean your data to improve model performance.

- Regularly update and fine-tune your deep learning models for better results.

- Experiment with different architectures to find the most suitable one for your task.

- Be mindful of overfitting and use techniques like dropout and regularization to combat it.

- Stay updated with the latest research and advancements in AI and deep learning.

Understand the basics of machine learning before diving into deep learning.

Before diving into deep learning, it’s crucial to understand the basics of machine learning, as it forms the foundation for more advanced concepts. Machine learning involves training algorithms to make predictions or decisions based on data, and it encompasses a variety of techniques such as supervised and unsupervised learning. By grasping these fundamental principles, one can better appreciate how deep learning builds upon them with its use of neural networks to model complex patterns and relationships. This foundational knowledge not only aids in comprehending how deep learning models are structured and trained but also enhances the ability to troubleshoot issues and optimize performance effectively.
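The difference between supervised and unsupervised learning can be shown with two very small algorithms. This is a sketch under simplifying assumptions: the data is one-dimensional, the function names `nearest_neighbor_label` and `two_means` are made up for this example, and the clustering routine expects at least two distinct values.

```python
def nearest_neighbor_label(labeled_points, query):
    """Supervised: predict the label of the closest labeled example."""
    closest = min(labeled_points, key=lambda pair: abs(pair[0] - query))
    return closest[1]


def two_means(values, iterations=10):
    """Unsupervised: group unlabeled 1-D values into two clusters (k-means, k=2)."""
    low, high = min(values), max(values)  # initial cluster centers
    near_low, near_high = [], []
    for _ in range(iterations):
        # Assign each value to the nearer center, then move each center to its mean.
        near_low = [v for v in values if abs(v - low) <= abs(v - high)]
        near_high = [v for v in values if abs(v - low) > abs(v - high)]
        low = sum(near_low) / len(near_low)
        high = sum(near_high) / len(near_high)
    return near_low, near_high


# Supervised: labels are provided with the training data.
labeled = [(1.0, "small"), (2.0, "small"), (9.0, "large"), (10.0, "large")]
predicted = nearest_neighbor_label(labeled, 8.2)

# Unsupervised: only raw values are given and the grouping is discovered.
group_a, group_b = two_means([1.0, 1.5, 2.0, 9.0, 9.5, 10.0])
print(predicted, group_a, group_b)
```

In the first case the algorithm learns from answers it is given; in the second it has to find structure on its own. Deep learning can be applied in both settings.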

Choose the right framework and tools based on your project requirements.

When embarking on a project involving AI and deep learning, selecting the right framework and tools is crucial to its success. Different frameworks offer various strengths and features, so understanding your project’s specific requirements will guide your choice. For instance, TensorFlow is renowned for its flexibility and scalability, making it ideal for large-scale projects that require complex model deployment. On the other hand, PyTorch is favored for its ease of use and dynamic computation graph, which is beneficial for research-focused applications where experimentation is key. Additionally, consider the community support and available resources for each tool, as these can significantly impact development efficiency. By aligning your toolset with your project’s goals and constraints, you can optimize performance and streamline the development process.

Preprocess and clean your data to improve model performance.

Preprocessing and cleaning your data is a crucial step in enhancing the performance of AI and deep learning models. Raw data often contains noise, inconsistencies, and irrelevant information that can hinder the learning process and lead to inaccurate predictions. By thoroughly cleaning the data—removing duplicates, handling missing values, normalizing scales, and encoding categorical variables—you ensure that the model receives high-quality input. This not only improves the model’s accuracy but also reduces training time by allowing it to focus on learning meaningful patterns rather than dealing with extraneous noise. Properly preprocessed data lays a strong foundation for building robust and efficient AI systems capable of delivering reliable results.
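The cleaning steps mentioned above can be sketched in plain Python. The record layout (an `age` number and a `color` category), the mean imputation strategy, and the function name `preprocess` are assumptions for illustration. In practice a data library would usually handle this, and statistics such as the mean and range would be computed on the training data only.

```python
def preprocess(rows):
    """Deduplicate, fill missing ages, min-max scale age, and one-hot encode color."""
    # 1. Remove exact duplicates while preserving order.
    seen, unique = set(), []
    for row in rows:
        key = (row["age"], row["color"])
        if key not in seen:
            seen.add(key)
            unique.append(dict(row))

    # 2. Handle missing values: replace a missing age with the mean of known ages.
    known = [r["age"] for r in unique if r["age"] is not None]
    mean_age = sum(known) / len(known)
    for r in unique:
        if r["age"] is None:
            r["age"] = mean_age

    # 3. Normalize scale: map ages onto the range [0, 1].
    ages = [r["age"] for r in unique]
    lo, hi = min(ages), max(ages)
    span = (hi - lo) or 1.0  # avoid dividing by zero when all ages are equal

    # 4. Encode the categorical variable as one-hot columns.
    categories = sorted({r["color"] for r in unique})
    features = []
    for r in unique:
        scaled_age = (r["age"] - lo) / span
        one_hot = [1.0 if r["color"] == c else 0.0 for c in categories]
        features.append([scaled_age] + one_hot)
    return features, categories


raw = [
    {"age": 20, "color": "red"},
    {"age": 40, "color": "blue"},
    {"age": 20, "color": "red"},    # duplicate of the first row
    {"age": None, "color": "red"},  # missing value
]
features, categories = preprocess(raw)
print(categories, features)
```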

Regularly update and fine-tune your deep learning models for better results.

Regularly updating and fine-tuning deep learning models is crucial for maintaining their accuracy and effectiveness. As new data becomes available, incorporating it into the training process helps models stay relevant and adapt to changing patterns or trends. Fine-tuning typically means continuing to train an existing model on new or task-specific data, often with a lower learning rate, and it may be combined with adjustments to hyperparameters or architecture. Done well, it can lead to more accurate predictions and better generalization to unseen data. By consistently refining models, organizations can keep their AI systems performing well in dynamic environments and extend the useful life of those systems.

Experiment with different architectures to find the most suitable one for your task.

Experimenting with different architectures is crucial in the realm of AI and deep learning, as it allows developers to discover the most effective model for a specific task. Each task may require a unique approach, and by testing various neural network structures—such as convolutional networks for image processing or recurrent networks for sequence prediction—developers can optimize performance and accuracy. This iterative process involves adjusting parameters, layer configurations, and activation functions to better capture the complexities of the data. Ultimately, exploring diverse architectures not only enhances model efficiency but also provides deeper insights into the underlying patterns within the dataset, leading to more robust AI solutions.
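One lightweight way to compare architectures is to make the layer configuration and activation function parameters of the model-building code. The sketch below does this for small fully connected networks with random, untrained weights. The helper names (`build_network`, `forward`, `count_parameters`) and the candidate layer sizes are assumptions for illustration, and for simplicity the activation is applied to the output layer as well.

```python
import math
import random


def relu(x):
    return max(0.0, x)


def build_network(layer_sizes, seed=0):
    """Create random weights and zero biases for a fully connected network."""
    rng = random.Random(seed)
    layers = []
    for n_in, n_out in zip(layer_sizes, layer_sizes[1:]):
        weights = [[rng.uniform(-1.0, 1.0) for _ in range(n_in)] for _ in range(n_out)]
        biases = [0.0] * n_out
        layers.append((weights, biases))
    return layers


def forward(layers, inputs, activation):
    """Pass inputs through every layer, applying the chosen activation each time."""
    values = list(inputs)
    for weights, biases in layers:
        values = [
            activation(sum(w * v for w, v in zip(row, values)) + b)
            for row, b in zip(weights, biases)
        ]
    return values


def count_parameters(layers):
    """Total number of weights and biases, a rough measure of model size."""
    return sum(len(w) * len(w[0]) + len(b) for w, b in layers)


# Two candidate configurations: 3 inputs and 2 outputs, different hidden layers.
candidates = {"shallow": [3, 4, 2], "deeper": [3, 8, 8, 2]}
sizes = {}
outputs = {}
for name, layer_sizes in candidates.items():
    net = build_network(layer_sizes)
    sizes[name] = count_parameters(net)
    outputs[name] = forward(net, [0.5, -0.2, 0.1], activation=relu)
    # Swapping activation=math.tanh here tries a different activation function.
    print(name, sizes[name], outputs[name])
```

In a real experiment each candidate would be trained and scored on validation data. The point here is only that treating architecture choices as parameters makes them easy to vary systematically.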

Be mindful of overfitting and use techniques like dropout and regularization to combat it.

In the realm of AI and deep learning, overfitting is a common challenge that occurs when a model learns the training data too well, capturing noise and details that do not generalize to new, unseen data. This can lead to poor performance on real-world applications. To combat overfitting, it is essential to employ techniques like dropout and regularization. Dropout involves randomly dropping units from the neural network during training, which helps prevent the model from becoming too reliant on any specific neurons. Regularization techniques, such as L1 or L2 regularization, add a penalty term to the loss function to discourage overly complex models. These strategies help ensure that the model maintains its ability to generalize beyond the training dataset, leading to more robust and reliable AI systems.
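Both techniques are simple to express in code. The following is a minimal sketch using plain Python lists. It uses the "inverted" form of dropout, which rescales the surviving units during training so that nothing needs to change at inference time. The function names and the example values are assumptions for illustration; deep learning frameworks provide their own built-in versions.

```python
import random


def dropout(activations, drop_prob, rng, training=True):
    """Inverted dropout: zero each unit with probability drop_prob while training."""
    if not training or drop_prob == 0.0:
        return list(activations)  # at inference time all units are kept unchanged
    keep_prob = 1.0 - drop_prob
    # Survivors are divided by keep_prob so the expected activation stays the same.
    return [a / keep_prob if rng.random() < keep_prob else 0.0 for a in activations]


def l1_penalty(weights, strength):
    """L1 regularization term: strength times the sum of absolute weights."""
    return strength * sum(abs(w) for w in weights)


def l2_penalty(weights, strength):
    """L2 regularization term: strength times the sum of squared weights."""
    return strength * sum(w * w for w in weights)


rng = random.Random(0)
hidden = [1.0, 1.0, 1.0, 1.0, 1.0, 1.0]
train_out = dropout(hidden, drop_prob=0.5, rng=rng, training=True)
eval_out = dropout(hidden, drop_prob=0.5, rng=rng, training=False)

weights = [1.0, -2.0, 3.0]
data_loss = 0.25  # placeholder value standing in for the prediction error
total_loss = data_loss + l2_penalty(weights, strength=0.1)
print(train_out, eval_out, total_loss)
```

The penalty is added to the loss the optimizer minimizes, so large weights are only kept when they reduce the prediction error by more than they cost.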

Stay updated with the latest research and advancements in AI and deep learning.

Staying updated with the latest research and advancements in AI and deep learning is crucial for anyone involved in these rapidly evolving fields. As technology progresses, new techniques, algorithms, and applications are continually being developed, which can significantly impact existing practices and open up new possibilities. By keeping abreast of current trends through academic journals, conferences, and online platforms, professionals can ensure they are leveraging the most effective tools and methodologies. This not only enhances their ability to innovate but also helps them remain competitive in an industry that thrives on cutting-edge knowledge. Engaging with the latest findings also fosters a deeper understanding of potential ethical implications and societal impacts, enabling more responsible development and deployment of AI technologies.