Understanding NLP Data: The Backbone of Natural Language Processing

In the age of digital communication, the ability to understand and process human language is more crucial than ever. This is where Natural Language Processing (NLP) comes into play. At its core, NLP is a field of artificial intelligence that focuses on the interaction between computers and humans through natural language. However, what truly powers NLP technologies is the data behind them.

What is NLP Data?

NLP data refers to the large volumes of text used to train machine learning models to understand and generate human language. This data can come from many sources, such as books, websites, and social media platforms. It ranges from structured resources like dictionaries and ontologies to unstructured text like emails or chat logs.

The Types of NLP Data

NLP data can be categorized into several types:

- Text Corpora: Large collections of text used for training models. These can be general-purpose or domain-specific.

- Annotated Datasets: Text data that has been labeled with specific tags for tasks such as sentiment analysis or named entity recognition.

- Lexical Resources: Databases containing information about words and their meanings, synonyms, antonyms, etc.

- Syntactic Resources: Resources that provide information about grammatical structures in a language.

- Semantic Resources: Datasets that help understand meanings and relationships between words beyond their literal interpretation.
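
To make the difference between a raw corpus and an annotated dataset more concrete, here is a minimal sketch of what a single annotated record might look like. The class names (`EntitySpan`, `AnnotatedExample`), the label strings, and the sample sentence are all hypothetical and chosen for illustration; real datasets use many different formats.

```python
from dataclasses import dataclass, field


@dataclass
class EntitySpan:
    """A named-entity annotation given as character offsets into the text."""
    start: int  # inclusive offset
    end: int    # exclusive offset
    label: str  # hypothetical tag such as "ORG" or "LOC"


@dataclass
class AnnotatedExample:
    """One record: raw text plus a sentiment label and entity spans."""
    text: str
    sentiment: str
    entities: list[EntitySpan] = field(default_factory=list)

    def entity_texts(self) -> list[tuple[str, str]]:
        """Return (surface string, label) pairs for each annotated span."""
        return [(self.text[e.start:e.end], e.label) for e in self.entities]


# A made-up record combining both annotation types mentioned above.
example = AnnotatedExample(
    text="Acme opened a new office in Berlin.",
    sentiment="neutral",
    entities=[EntitySpan(0, 4, "ORG"), EntitySpan(28, 34, "LOC")],
)

print(example.entity_texts())
```

Storing entities as character offsets rather than copied strings keeps each annotation tied to an exact position in the original text, which matters when the same word appears more than once.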

The Importance of Quality Data

The effectiveness of any NLP model depends heavily on the quality and quantity of the data it is trained on. High-quality data helps the model capture linguistic nuances and produce reliable outputs. Poor-quality or biased data can lead to inaccurate models that perpetuate stereotypes or misinterpret language.
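
As a rough illustration of one basic quality step, the sketch below removes empty, very short, and duplicate texts from a small list. The function name `clean_corpus`, the `min_words` threshold, and the sample sentences are assumptions made for this example; real pipelines usually apply many more checks.

```python
def clean_corpus(texts, min_words=3):
    """Drop empty, very short, and duplicate texts, keeping first occurrences."""
    seen = set()
    cleaned = []
    for text in texts:
        normalized = " ".join(text.split())  # collapse runs of whitespace
        key = normalized.lower()             # case-insensitive duplicate check
        if len(normalized.split()) < min_words or key in seen:
            continue
        seen.add(key)
        cleaned.append(normalized)
    return cleaned


# Made-up sample data with a near-duplicate, a too-short entry, and an empty one.
raw = [
    "The service was great.",
    "the service   was great.",
    "ok",
    "",
    "Delivery took two weeks.",
]
cleaned = clean_corpus(raw)
print(cleaned)
```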

Challenges in Collecting NLP Data

While there is an abundance of textual data available online, collecting relevant and high-quality datasets poses several challenges:

- Diversity: Ensuring datasets represent a wide range of languages, dialects, and cultural contexts.

- Anonymization: Protecting personal information while using real-world text sources for training purposes.

- Bias Mitigation: Identifying and reducing biases present in training datasets to create fairer models.

- Linguistic Complexity: Handling idiomatic expressions, sarcasm, slang, and other complex linguistic features.
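
The anonymization challenge can be sketched with a simple pattern-based masker. This is a minimal illustration, not a complete solution: the regular expressions, the placeholder tokens `[EMAIL]` and `[PHONE]`, and the function name `mask_pii` are assumptions for this example, and patterns like these will miss names, addresses, and many other kinds of personal information.

```python
import re

# Deliberately simple patterns; real-world PII detection needs far more care.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE_RE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def mask_pii(text):
    """Replace email addresses and phone-like numbers with placeholder tokens."""
    text = EMAIL_RE.sub("[EMAIL]", text)
    text = PHONE_RE.sub("[PHONE]", text)
    return text


# Made-up sample sentence.
sample = "Contact jane.doe@example.com or call +1 555-123-4567 after 5pm."
masked = mask_pii(sample)
print(masked)
```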

The Future of NLP Data

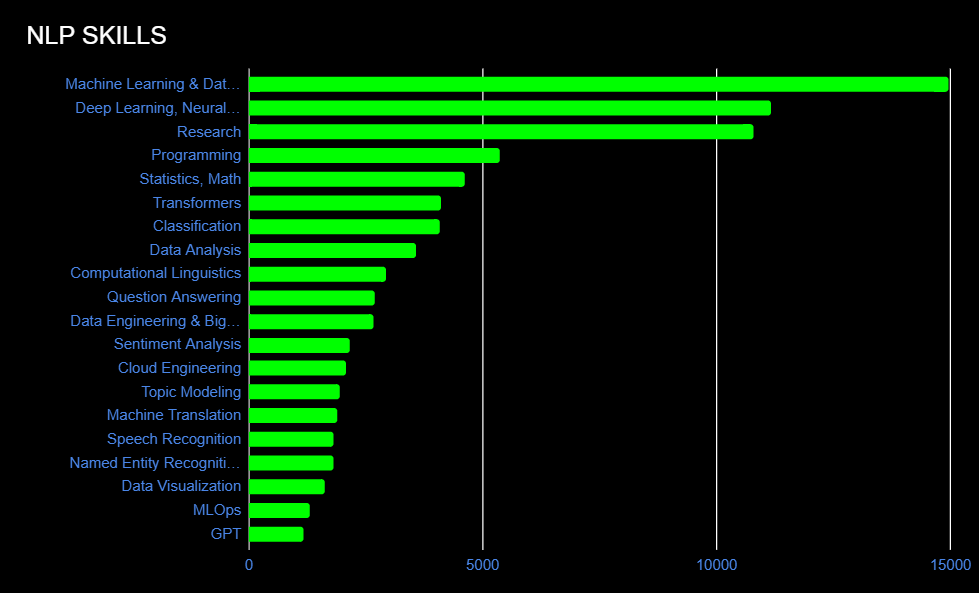

The field of NLP continues to evolve rapidly with advancements in AI technologies like deep learning and transformer models. As these technologies progress, so does the need for more sophisticated datasets capable of capturing complex linguistic phenomena across diverse languages and contexts. Researchers are continually working on creating multilingual corpora as well as developing new methods for better annotating existing datasets.

In conclusion

Data is pivotal to NLP: it shapes future innovations in the field and, ultimately, leads to better communication between humans and machines, bridging gaps once thought insurmountable.

Top 5 Frequently Asked Questions About Natural Language Processing (NLP) Data

- What are the types of data used for NLP?

- Is ChatGPT an LLM or NLP?

- What is NLP with an example?

- What is NLP used for?

- What does NLP stand for?

What are the types of data used for NLP?

The types of data commonly used for NLP tasks are text corpora, annotated datasets, lexical resources, syntactic resources, and semantic resources. Text corpora are large collections of text used for training models, while annotated datasets contain text labeled with specific tags for tasks like sentiment analysis. Lexical resources provide information about word meanings and relationships, syntactic resources describe grammatical structures, and semantic resources help capture deeper meanings and relationships between words. Each type serves a different purpose in enabling NLP systems to comprehend and generate human language.

Is ChatGPT an LLM or NLP?

ChatGPT is both: it is a conversational application built on a large language model (LLM), and LLMs are one of the technologies within natural language processing (NLP). As an LLM-based system, ChatGPT draws on extensive training data to generate human-like text from the input it receives. NLP is the broader field concerned with enabling computers to understand, interpret, and produce human language, and it covers tasks such as sentiment analysis, translation, and entity recognition. LLMs like the one behind ChatGPT focus on modeling context and generating coherent responses, so ChatGPT is best seen as a practical application of NLP in the form of a conversational agent.

What is NLP with an example?

Natural Language Processing (NLP) is a branch of artificial intelligence that focuses on enabling computers to understand, interpret, and generate human language in a way that is both meaningful and contextually relevant. One example of NLP in action is chatbots. Chatbots are computer programs designed to simulate conversation with human users, providing automated responses based on the input they receive. These chatbots use NLP algorithms to analyze and process the user’s messages, understand the intent behind them, and generate appropriate replies in natural language. By leveraging NLP techniques, chatbots can engage in seamless interactions with users, offering assistance, answering questions, or providing information in a conversational manner.
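
The analyze, detect intent, and reply loop described above can be sketched with a toy keyword matcher. This is only an illustration under strong simplifying assumptions: the intents, keywords, and replies are hypothetical, and production chatbots typically rely on trained models rather than keyword overlap.

```python
import re

# Hypothetical intents, keywords, and replies, for illustration only.
INTENTS = {
    "greeting": ({"hello", "hi", "hey"}, "Hello! How can I help you?"),
    "hours": ({"open", "hours", "close"}, "We are open 9am to 5pm, Monday to Friday."),
    "goodbye": ({"bye", "goodbye"}, "Goodbye!"),
}
FALLBACK = "Sorry, I did not understand that."


def detect_intent(message):
    """Return the intent whose keywords overlap most with the message, or None."""
    tokens = set(re.findall(r"[a-z']+", message.lower()))
    best_intent, best_score = None, 0
    for intent, (keywords, _reply) in INTENTS.items():
        score = len(tokens & keywords)
        if score > best_score:
            best_intent, best_score = intent, score
    return best_intent


def reply(message):
    """Look up the canned reply for the detected intent, or fall back."""
    intent = detect_intent(message)
    return INTENTS[intent][1] if intent else FALLBACK


print(reply("What hours are you open?"))
```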

What is NLP used for?

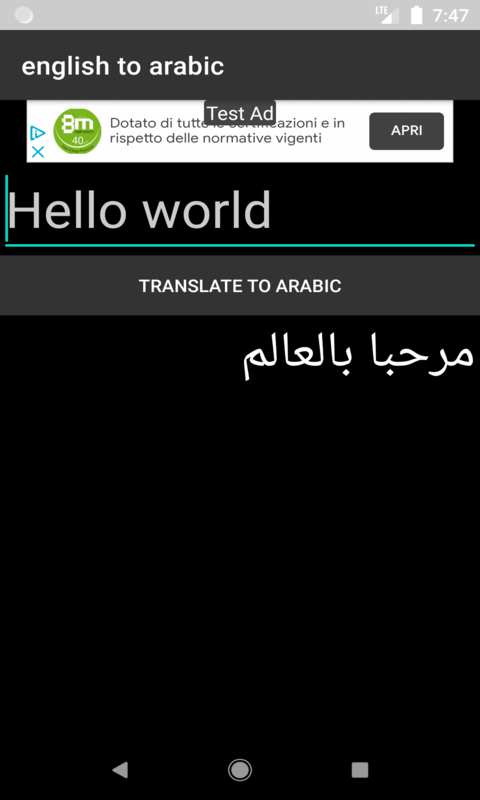

Natural Language Processing (NLP) is a versatile field of artificial intelligence that is used for a wide range of applications. NLP technology enables computers to understand, interpret, and generate human language, allowing for seamless interaction between humans and machines. NLP is commonly used for tasks such as sentiment analysis, language translation, chatbots, speech recognition, text summarization, and information extraction. Its applications span across various industries including healthcare, finance, customer service, marketing, and more. By harnessing the power of NLP, businesses can automate processes, gain valuable insights from textual data, enhance user experiences, and improve overall efficiency in communication and decision-making.

What does NLP stand for?

NLP stands for Natural Language Processing. It is a branch of artificial intelligence that focuses on the interaction between computers and humans through natural language. NLP enables computers to understand, interpret, and generate human language in a way that is both meaningful and contextually relevant. By leveraging algorithms, linguistic rules, and large datasets, NLP technologies empower machines to perform tasks such as language translation, sentiment analysis, speech recognition, and text generation. The application of NLP has revolutionized various industries, including healthcare, finance, customer service, and more, by enhancing communication efficiency and enabling seamless interactions between humans and machines.