The Power of Deep Learning in Natural Language Processing

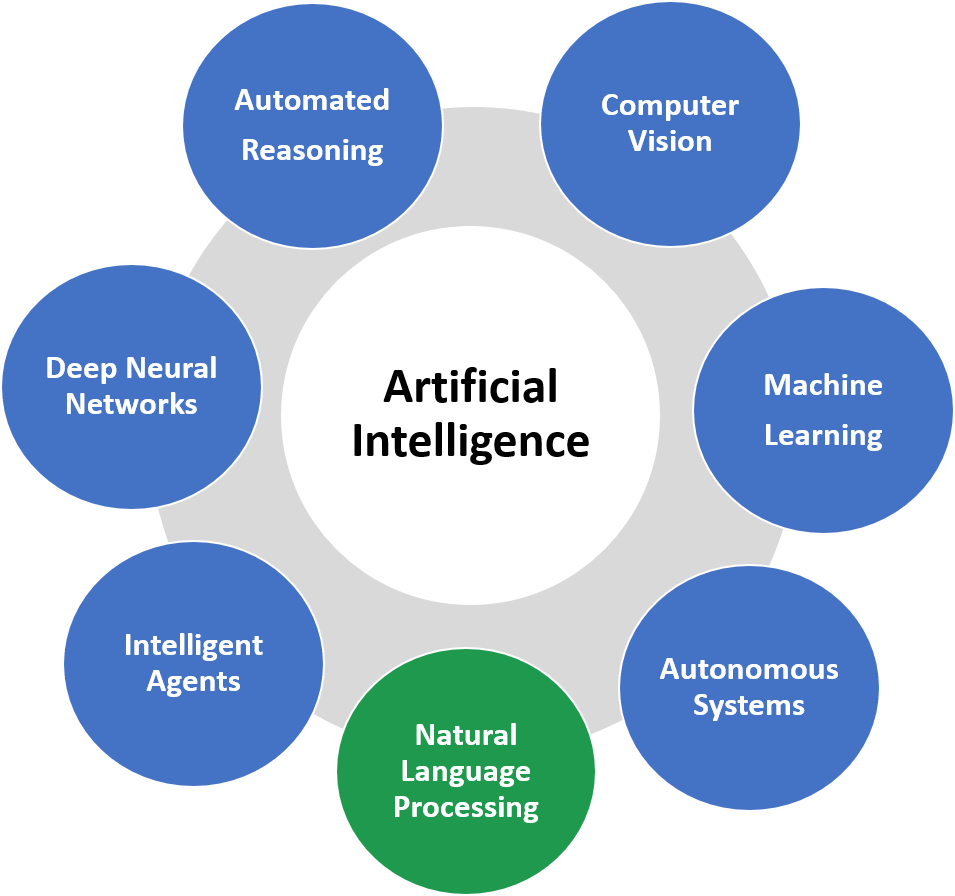

Natural Language Processing (NLP) is a branch of artificial intelligence that focuses on the interaction between computers and human language. Deep learning, a subset of machine learning, has revolutionized the field of NLP by enabling computers to understand, interpret, and generate human language in a more sophisticated and nuanced way.

Deep neural networks have shown strong results in NLP tasks such as machine translation, sentiment analysis, text generation, and speech recognition. They are loosely inspired by biological neurons: information passes through stacked layers of simple interconnected units, and each layer transforms the output of the one before it.

One of the key advantages of deep learning in NLP is its ability to automatically learn features from raw data without the need for manual feature extraction. This allows deep learning models to capture complex patterns and relationships in language data, leading to more accurate and robust NLP systems.

For example, in machine translation, deep learning models learn to translate between languages from large amounts of parallel text, that is, sentences paired with their translations. Passing this data through multiple neural network layers lets the models produce translations that are often fluent and take the surrounding context into account, although errors still occur.

In sentiment analysis, deep learning models classify text according to the sentiment the author expresses. Trained on labeled datasets of positive and negative examples, they learn to identify opinions and emotions in text, often with high accuracy on text similar to their training data.

Overall, deep learning has significantly advanced the capabilities of NLP systems and paved the way for more sophisticated language technologies. As researchers explore new architectures and techniques, further improvements in how we interact with machines through natural language can be expected.

5 Essential Tips for Enhancing NLP with Deep Learning Techniques

- Preprocess text data by tokenizing, lowercasing, and removing stopwords to improve model performance.

- Utilize word embeddings like Word2Vec or GloVe to represent words as dense vectors and capture semantic relationships.

- Experiment with different neural network architectures such as RNNs, LSTMs, or Transformers for NLP tasks.

- Regularize your deep learning models with techniques like dropout and batch normalization to prevent overfitting.

- Fine-tune pre-trained language models like BERT or GPT for specific NLP tasks to leverage their learned representations.

Preprocess text data by tokenizing, lowercasing, and removing stopwords to improve model performance.

Effective preprocessing is the first step in preparing text for a deep learning model. Tokenization breaks the text into individual words or tokens. Lowercasing the tokens standardizes the text and shrinks the vocabulary. Removing stopwords, common words like “and,” “the,” and “is” that carry little meaning on their own, can help the model focus on the relevant content. These steps tend to help most with smaller models trained from scratch, and they should be applied with care: negations such as “not” appear in many stopword lists yet can flip the sentiment of a sentence, and pre-trained models such as BERT come with their own tokenizers and generally expect text prepared the same way as their training data.
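The preprocessing steps described above can be sketched in a few lines of plain Python. This is a minimal illustration, not a production pipeline: the `STOPWORDS` set and the `preprocess` function are hypothetical names introduced here, and the tiny stopword list is an assumption standing in for a curated list from a library such as NLTK or spaCy.

```python
import re

# Illustrative stopword list (an assumption); real projects usually rely on
# a larger curated list. "not" is deliberately left out so negation survives.
STOPWORDS = {"a", "an", "and", "the", "is", "of", "to", "in"}


def preprocess(text, stopwords=STOPWORDS):
    """Lowercase the text, split it into simple word tokens, drop stopwords."""
    # Tokenize: runs of lowercase letters, digits, or apostrophes.
    tokens = re.findall(r"[a-z0-9']+", text.lower())
    # Remove stopwords while preserving the original token order.
    return [tok for tok in tokens if tok not in stopwords]


print(preprocess("The movie is long, and the ending is NOT good."))
# -> ['movie', 'long', 'ending', 'not', 'good']
```

Keeping “not” in the output matters here: dropping it would turn a negative review into one that looks positive.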

Utilize word embeddings like Word2Vec or GloVe to represent words as dense vectors and capture semantic relationships.

Word embeddings such as Word2Vec and GloVe represent each word as a dense vector learned from how words co-occur in large text corpora. Words used in similar contexts end up with similar vectors, so distances and directions in the vector space reflect semantic relationships. Note that these embeddings are static: a word receives the same vector in every sentence, so different senses of a word like “bank” share one representation. Encoding semantic information numerically in this way gives NLP models a better starting point than one-hot word identifiers and can improve tasks like text classification, sentiment analysis, and machine translation.
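To make “semantic relationships” concrete, the sketch below compares word vectors with cosine similarity, a common way to measure how close two embeddings are. The three-dimensional vectors in `EMBEDDINGS` are made up for illustration; real Word2Vec or GloVe vectors are learned from text and usually have tens to hundreds of dimensions. The helper names are hypothetical.

```python
import math

# Hypothetical toy vectors, NOT real Word2Vec or GloVe values.
EMBEDDINGS = {
    "king": [0.8, 0.6, 0.1],
    "queen": [0.7, 0.7, 0.1],
    "apple": [0.1, 0.2, 0.9],
}


def cosine_similarity(u, v):
    """Cosine of the angle between two vectors (1.0 means same direction)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def most_similar(word, embeddings=EMBEDDINGS):
    """Return the other vocabulary word whose vector is closest to `word`."""
    target = embeddings[word]
    others = (w for w in embeddings if w != word)
    return max(others, key=lambda w: cosine_similarity(target, embeddings[w]))


print(most_similar("king"))  # -> queen
```

With pre-trained vectors loaded from disk, the same nearest-neighbor lookup would work unchanged; only the contents of the dictionary would differ.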

Experiment with different neural network architectures such as RNNs, LSTMs, or Transformers for NLP tasks.

No single architecture is best for every NLP task, so it is worth comparing several. Recurrent neural networks (RNNs) read a sequence one token at a time and carry a hidden state forward. Long short-term memory networks (LSTMs) are RNNs with gating mechanisms that help retain information across longer sequences. Transformers replace recurrence with self-attention, which lets every token attend to every other token and allows the whole sequence to be processed in parallel. By evaluating different architectures on the same data, researchers and developers can identify the one best suited to a given task, whether language understanding, translation, sentiment analysis, or another NLP application.
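As one illustration of how these architectures differ, the sketch below implements scaled dot-product self-attention, the core operation of the Transformer, in plain Python. It is a simplified version under a stated assumption: queries, keys, and values are all taken to be the input vectors themselves, whereas real Transformer layers first apply learned linear projections. The function names are hypothetical.

```python
import math


def softmax(scores):
    """Turn a list of scores into weights that are positive and sum to 1."""
    highest = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - highest) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(vectors):
    """Scaled dot-product self-attention with queries = keys = values.

    Assumption: learned projection matrices are omitted to keep this small.
    """
    dim = len(vectors[0])
    outputs = []
    for query in vectors:
        # Score the query against every token, scaled by sqrt(dimension).
        scores = [
            sum(q * k for q, k in zip(query, key)) / math.sqrt(dim)
            for key in vectors
        ]
        weights = softmax(scores)
        # Each output is a weighted average of all token vectors.
        outputs.append(
            [sum(w * v[i] for w, v in zip(weights, vectors)) for i in range(dim)]
        )
    return outputs


tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
for row in self_attention(tokens):
    print([round(x, 3) for x in row])
```

Unlike an RNN, nothing in the loop over `query` depends on the previous iteration, which is why attention can be computed for all positions in parallel.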

Regularize your deep learning models with techniques like dropout and batch normalization to prevent overfitting.

To improve the generalization of deep learning models in natural language processing, apply regularization techniques such as dropout. Dropout randomly deactivates a fraction of neurons during each training iteration, which forces the model to spread what it learns across many pathways instead of relying on a few specific features. It is switched off at inference time. Batch normalization normalizes the inputs to a layer across each mini-batch, which improves gradient flow and speeds up convergence. Its main role is to stabilize training, and any regularizing effect comes from the noise in the mini-batch statistics. In sequence models such as Transformers, layer normalization, which normalizes across the features of each example, is the more common choice. Incorporating these techniques can make models more robust when handling complex language data.
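The two techniques can be sketched in plain Python as follows. This is a minimal illustration and not how a deep learning framework implements them: `dropout` uses the common “inverted” formulation, which rescales the surviving values during training, and `batch_norm` uses training-mode batch statistics while omitting the learned scale and shift parameters. Both function names and their parameters are hypothetical.

```python
import math
import random


def dropout(activations, p=0.5, training=True, rng=random):
    """Inverted dropout: zero each value with probability p, rescale the rest."""
    if not training or p == 0.0:
        return list(activations)  # dropout is a no-op at inference time
    keep = 1.0 - p
    # Survivors are divided by `keep` so the expected activation is unchanged.
    return [a / keep if rng.random() < keep else 0.0 for a in activations]


def batch_norm(batch, eps=1e-5):
    """Normalize each feature to zero mean and unit variance across the batch.

    Assumption: learned scale/shift and running statistics are left out.
    """
    n = len(batch)
    columns = list(zip(*batch))  # one tuple per feature
    means = [sum(col) / n for col in columns]
    variances = [
        sum((x - m) ** 2 for x in col) / n for col, m in zip(columns, means)
    ]
    return [
        [(x - m) / math.sqrt(v + eps) for x, m, v in zip(row, means, variances)]
        for row in batch
    ]


rng = random.Random(0)  # fixed seed so the example is repeatable
print(dropout([1.0, 2.0, 3.0, 4.0], p=0.5, rng=rng))
print(batch_norm([[1.0, 10.0], [3.0, 30.0]]))
```

Calling `dropout(..., training=False)` returns the activations unchanged, mirroring how dropout is disabled when a trained model is used for prediction.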

Fine-tune pre-trained language models like BERT or GPT for specific NLP tasks to leverage their learned representations.

Fine-tuning a pre-trained language model such as BERT or GPT is often the most effective way to approach a specific NLP task. These models learn rich representations during pre-training on vast amounts of unlabeled text. Fine-tuning continues training on a smaller labeled dataset for the target task, usually with a task-specific output layer and a low learning rate, so the model adapts to the task without losing what it already learned. This typically improves performance and reduces the amount of labeled data needed compared with training from scratch.
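The idea of reusing learned representations can be illustrated with a deliberately simplified sketch. Instead of updating a full BERT or GPT model, the code below trains only a small logistic-regression “head” on top of fixed feature vectors, a lighter-weight relative of fine-tuning often called feature extraction or linear probing. The vectors in `FEATURES` are made-up stand-ins for the output of a frozen pre-trained encoder, and all names are hypothetical.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def train_head(features, labels, lr=0.5, epochs=200):
    """Fit a logistic-regression head on fixed features with plain SGD."""
    weights = [0.0] * len(features[0])
    bias = 0.0
    for _ in range(epochs):
        for x, y in zip(features, labels):
            pred = sigmoid(sum(w * xi for w, xi in zip(weights, x)) + bias)
            error = pred - y  # gradient of the log loss w.r.t. the logit
            weights = [w - lr * error * xi for w, xi in zip(weights, x)]
            bias -= lr * error
    return weights, bias


def predict(weights, bias, x):
    """Return 1 (positive) or 0 (negative) for one feature vector."""
    score = sum(w * xi for w, xi in zip(weights, x)) + bias
    return 1 if sigmoid(score) >= 0.5 else 0


# Hypothetical sentence vectors standing in for a frozen encoder's output.
FEATURES = [[0.9, 0.1], [0.8, 0.3], [0.2, 0.9], [0.1, 0.7]]
LABELS = [1, 1, 0, 0]  # 1 = positive sentiment, 0 = negative

weights, bias = train_head(FEATURES, LABELS)
print([predict(weights, bias, x) for x in FEATURES])
```

Full fine-tuning goes one step further by also updating the encoder's own weights, typically through a library that provides the pre-trained model, but the training loop follows the same pattern of small gradient steps on labeled task data.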